Decoding Gemini 3’s Multimodal Video Logic: From Pixels to Temporal Reasoning

Insights

5 mins read

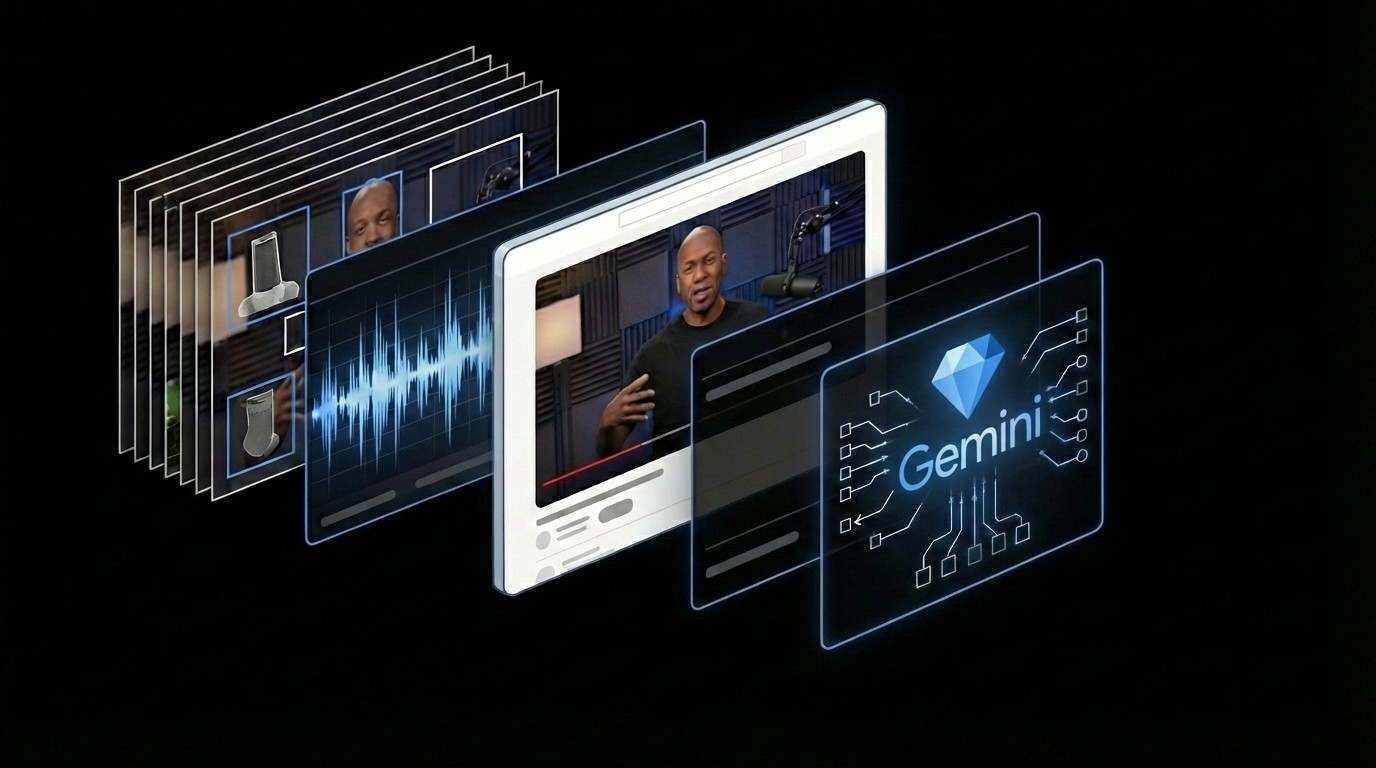

Gemini 3 Pro represents a paradigm shift from frame-by-frame analysis to native temporal reasoning.

As of February 2026, Gemini 3 Pro has established a new gold standard with its 1.04-million-token context window. Unlike competing models that rely on "frame sampling"—effectively skipping chunks of data to save compute—Gemini 3 processes video as a native multimodal stream. This means the model holds up to an hour of high-resolution video in its active "short-term memory" simultaneously.

This differentiation is critical for Temporal Causality. In earlier iterations, AI might identify a "running dog" as a static label.

Gemini 3, however, uses its vast context to understand the why: it sees the ball thrown at 00:05, the dog’s anticipatory muscle tension at 00:10, and the successful retrieval at 00:15. It perceives the entire narrative arc as a single data point.

For marketers, this eliminates the "needle in the haystack" problem. Whether it’s a 60-minute technical deep-dive or a 15-second Short, Gemini 3 utilizes the thinking_level parameter to cross-reference visual cues with audio and OCR text in real-time.

With "Deep Thinking", the model is cross-referencing visual cues with audio transcripts and on-screen text (OCR) to derive high-level sentiment and strategic intent.

So that means Gemini isn't just seeing your video—it's understanding your brand's narrative structure.

This allows the model to recognize brand intent and product efficacy that sampling-based models would simply miss. By moving beyond "looking" to "reasoning," Gemini 3 ensures that every second of your content contributes to its internal model of your brand.

Similar Topic